Purpose

This page presents experiments with non-CRUD data operations.

Aggregation Operation

An aggregation operation adds up the values of a number of objects. When executing such an operation in RAMCloud, three questions, among others, are of interest:

- Where to execute the aggregation operation (client or server side)?

- How to describe the range of objects which should be included in the operation?

- How to interpret the objects themselves?

The experiments below center on the question of where to execute the operation. Four different scenarios are implemented:

- Client-side aggregation: The client requests the objects one by one, where each object contains a single integer value. Consequently, a read RPC is invoked for every object, and the client computes the sum locally.

- Server-side aggregation via hash table lookup: A range of keys is passed to the server, and the server performs a lookup in its own hash table for every key. Again, each object contains a single integer, and these integers are added up (as shown in Listing 1). Once the aggregation is done, the resulting sum is sent back to the client via RPC.

- Server-side aggregation via hashTable forEach: The hash table in the MasterServer offers a forEach method that iterates over all objects contained in the hash table. A callback, shown in Listing 2, can be registered with that method.

- Server-side aggregation via Log traversal: In a MasterServer in RAMCloud, the actual objects are stored in a log. In this experiment, the complete Log is traversed without using the hash table at all (as shown in Listing 3).

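Listing 1: Server-side aggregation via hash table lookup

    // Look up every key in [0, range) and add up the integer payloads.
    for (uint64_t i = 0; i < range; ++i) {
        LogEntryHandle handle = objectMap.lookup(tableId, i);
        const Object* obj = handle->userData<Object>();
        // Each object holds exactly one int.
        const int* p = reinterpret_cast<const int*>(obj->data);
        sum += static_cast<uint64_t>(*p);
    }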

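Listing 2: Aggregation callback for the hashTable forEach method

    /**
     * Aggregation callback: adds the integer stored in one object to the
     * running sum of the MasterServer that is passed in as the cookie.
     */
    void
    aggregateCallback(LogEntryHandle handle, uint8_t type, void* cookie)
    {
        const Object* obj = handle->userData<Object>();
        MasterServer* server = reinterpret_cast<MasterServer*>(cookie);
        // Each object holds exactly one int.
        const int* p = reinterpret_cast<const int*>(obj->data);
        server->sum += static_cast<uint64_t>(*p);
    }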

Listing 3: Aggregation via Log traversal

    /**
     * Aggregation via traversing all SegmentEntries throughout the entire Log
     */
    uint64_t
    Log::aggregate()
    {
        uint64_t sum = 0;
        // Iterate over all Segments in the Log
        foreach (ActiveIdMap::value_type& idSegmentPair, activeIdMap) {
            Segment* segment = idSegmentPair.second;
            // Iterate over all SegmentEntries in a Segment
            for (SegmentIterator i(segment); !i.isDone(); i.next()) {
                SegmentEntryHandle seh = i.getHandle();
                // Check that it is an Object. 560620143 == 0x216a626f,
                // which is the byte sequence "obj!" in little-endian order.
                if (seh->type() == 560620143) {
                    const Object* obj = seh->userData<Object>();
                    const int* p = reinterpret_cast<const int*>(obj->data);
                    sum += static_cast<uint64_t>(*p);
                }
            }
        }
        return sum;
    }
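The three server-side strategies can be tried outside RAMCloud with a toy model. The sketch below is an illustration only: `ToyObject`, `ToyMaster` and the three `sumBy...` functions are hypothetical names and not part of RAMCloud, a `std::vector` stands in for the log, and a `std::unordered_map` stands in for the hash table. It assumes every key is written exactly once, because an overwritten key would leave a stale entry in the toy log and the log scan would then count it twice.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <unordered_map>
#include <vector>

// Toy stand-ins for illustration only; none of these types exist in RAMCloud.
struct ToyObject {
    uint64_t key;
    unsigned char data[sizeof(int32_t)];  // payload: one native-endian int
};

struct ToyMaster {
    std::vector<ToyObject> log;                    // append-only object store
    std::unordered_map<uint64_t, size_t> index;    // key -> position in log

    void write(uint64_t key, int32_t value) {
        assert(index.find(key) == index.end());    // one write per key only
        ToyObject obj;
        obj.key = key;
        std::memcpy(obj.data, &value, sizeof(value));
        index[key] = log.size();
        log.push_back(obj);
    }
};

// Interpret an object's payload as one int (memcpy avoids alignment issues).
static int32_t payload(const ToyObject& obj) {
    int32_t v;
    std::memcpy(&v, obj.data, sizeof(v));
    return v;
}

// Strategy 1: one index lookup per key in [0, range); missing keys are skipped.
uint64_t sumByLookup(const ToyMaster& m, uint64_t range) {
    uint64_t sum = 0;
    for (uint64_t key = 0; key < range; ++key) {
        auto it = m.index.find(key);
        if (it != m.index.end())
            sum += static_cast<uint64_t>(payload(m.log[it->second]));
    }
    return sum;
}

// Strategy 2: visit every entry of the index, whatever its key.
uint64_t sumByForEach(const ToyMaster& m) {
    uint64_t sum = 0;
    for (const auto& entry : m.index)
        sum += static_cast<uint64_t>(payload(m.log[entry.second]));
    return sum;
}

// Strategy 3: scan the log sequentially without touching the index.
uint64_t sumByLogScan(const ToyMaster& m) {
    uint64_t sum = 0;
    for (const ToyObject& obj : m.log)
        sum += static_cast<uint64_t>(payload(obj));
    return sum;
}
```

When the keys 0 to N-1 are each written once, all three functions return the same sum; they differ only in how much memory they touch and in which order.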

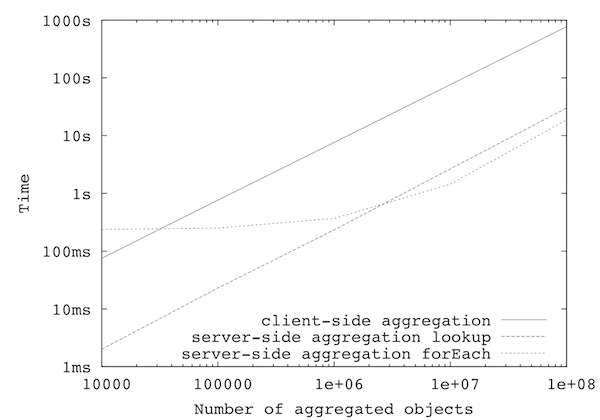

Benchmarking

The benchmarks below were executed on separate machines (out of the Stanford RAMCloud cluster) for client and server, connected via InfiniBand. After each run, the sums computed on the client side and on the server side were checked for equality. This particular benchmark allows the following conclusions:

- Executing the aggregation on the server side yields a performance improvement of up to a factor of 50.

- When traversing a set of distinct objects, retrieving a single object takes about 7-8 µs (or a RAMCloud client can request about 130,000 objects/sec from a single RAMCloud server).

- When invoking the hashTable forEach method, the whole memory allocated for the hash table has to be traversed. This is fine if the hash table is densely packed with objects. If it is sparsely populated (as in the experiments below, where the hash table had a size of ~2.5 GB and some experiments stored only a few objects), this introduces a penalty.

- When traversing large amounts of objects, the forEach method is 1.6-1.8x faster than looking up each individual object.
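The penalty for a sparsely populated hash table can be illustrated with a toy table. This is a sketch under stated assumptions: `ToyTable`, `ScanResult` and `scanAllSlots` are hypothetical names, the table uses simple linear probing, and it is not RAMCloud's HashTable. The point is only that a forEach-style scan visits every allocated slot, so its cost depends on the table size rather than on the number of stored objects.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Toy fixed-size table for illustration; not RAMCloud's HashTable.
struct ToyTable {
    struct Slot {
        bool used;
        uint64_t key;
        int32_t value;
    };
    std::vector<Slot> slots;   // all slots are allocated up front
    size_t count;              // number of occupied slots

    explicit ToyTable(size_t numSlots) : slots(numSlots), count(0) {}

    // Linear-probing insert; assumes distinct keys and a table that is not full.
    void insert(uint64_t key, int32_t value) {
        assert(count < slots.size());
        size_t i = static_cast<size_t>(key % slots.size());
        while (slots[i].used)
            i = (i + 1) % slots.size();
        slots[i].used = true;
        slots[i].key = key;
        slots[i].value = value;
        ++count;
    }
};

struct ScanResult {
    uint64_t sum;          // sum over all stored values
    size_t slotsVisited;   // slots touched, occupied or not
};

// forEach-style aggregation: walks the whole slot array, empty slots included.
ScanResult scanAllSlots(const ToyTable& t) {
    ScanResult r = {0, 0};
    for (const ToyTable::Slot& s : t.slots) {
        ++r.slotsVisited;
        if (s.used)
            r.sum += static_cast<uint64_t>(s.value);
    }
    return r;
}
```

With a table of 1,000,000 slots that holds only 10 objects, `scanAllSlots` still visits all 1,000,000 slots to add up 10 values, whereas 10 individual lookups would touch only a handful of slots.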

| Number of objects | Client-side aggregation | Server-side aggregation (hash table lookup) | Server-side aggregation (hashTable forEach) | Server-side aggregation (Log traversal) |
|---|---|---|---|---|
| 10,000 | 63 ms | 2 ms | 238 ms | 6 ms |
| 100,000 | 648 ms | 23 ms | 251 ms | 9 ms |
| 1,000,000 | 6485 ms | 233 ms | 369 ms | 21 ms |
| 10,000,000 | 64258 ms | 2662 ms | 1444 ms | 142 ms |
| 100,000,000 | 652201 ms | 30049 ms | 18752 ms | 1422 ms |
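The per-object costs and speedup factors quoted in the conclusions can be recomputed from the table. The sketch below copies the measured values into an array; the helper names `perObjectMicros` and `speedup` are hypothetical, and the assignment of the three server-side columns to hash table lookup, forEach and Log traversal (in that order) is an assumption based on the order of the scenario list.

```cpp
#include <cassert>
#include <cstdint>

// One row of the benchmark table; all times are in milliseconds.
// Assumption: the server-side columns are ordered lookup, forEach, log scan.
struct Row {
    uint64_t objects;
    double clientMs;
    double lookupMs;
    double forEachMs;
    double logScanMs;
};

const Row kRows[] = {
    {10000ULL,     63,     2,     238,   6},
    {100000ULL,    648,    23,    251,   9},
    {1000000ULL,   6485,   233,   369,   21},
    {10000000ULL,  64258,  2662,  1444,  142},
    {100000000ULL, 652201, 30049, 18752, 1422},
};

// Average time per object in microseconds.
double perObjectMicros(double totalMs, uint64_t objects) {
    assert(objects > 0);
    return totalMs * 1000.0 / static_cast<double>(objects);
}

// How many times faster a run taking `ms` is than one taking `baselineMs`.
double speedup(double baselineMs, double ms) {
    assert(ms > 0);
    return baselineMs / ms;
}
```

For the 100,000,000-object row, `perObjectMicros(652201, 100000000)` gives roughly 6.5 µs per client-side read, and `speedup(30049, 18752)` gives roughly 1.6; for the 10,000,000-object row, `speedup(2662, 1444)` gives roughly 1.8. These are the two ends of the 1.6-1.8x range stated above.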