Transaction_satoshi

I-1. Objective)

Provide multi-object (database) transactions with ACID properties:

- Atomic

- Consistent

- Isolated

- Durable

I-2. CAP Theorem)

- Consistency

- Availability

- Partition tolerance

- Relaxation of availability) No data replication for concurrent service. The system is temporarily unavailable until node recovery completes.

- Relaxation of partition tolerance) When the network is partitioned, the partition isolated from the coordinator cannot continue service.

II. Current Proposal)

II-1. Basic Strategy)

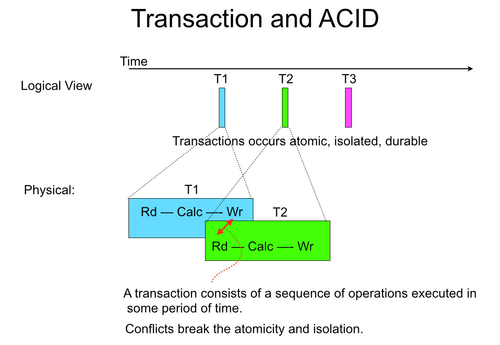

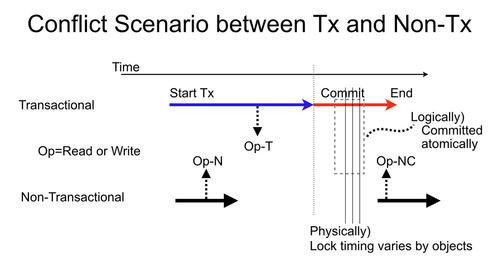

Figure II-1a

Each transaction consists of a sequence of operations and is executed over some period of time, as seen in Figure II-1a.

To achieve ACID, there are two strategies:

- Pessimistic lock - allow only one transaction to be executed.

- Pros) Simple, No re-execution overhead by conflict

- Cons) Small parallelism

- Optimistic lock - executes multiple transactions at the same time while watching for conflicts. If a conflict occurs, cancel the conflicting transaction and rewind its side effects.

- Pros) Large parallelism

- Cons) Re-execution overhead with conflict

In traditional databases and transaction systems, the probability of conflict is considered very low.

Most systems take the optimistic lock approach. Some systems combine it with pessimistic locks to improve efficiency.

We will apply only the optimistic approach.

II-2. Memory Renaming)

To improve the efficiency of parallel execution, we apply a technique called 'memory renaming', which is widely used in parallel processing such as out-of-order superscalar processors. Memory renaming is similar to register renaming: http://en.wikipedia.org/wiki/Register_renaming .

A similar technique is used in transactional memory: http://en.wikipedia.org/wiki/Transactional_memory

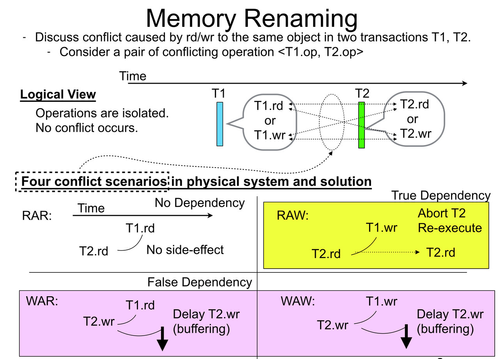

Figure II-2a

- RAR hazard (Read after Read) is considered harmless.

- True dependency (RAW: Read after Write) is detected and resolved by canceling the affected transactions and re-executing them.

- All other cases (WAR: Write after Read, WAW: Write after Write) are false dependencies and are resolved by renaming (buffering the write and applying it later).
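The hazard classification above can be written down as a small lookup. This is only an illustrative sketch: the names `Hazard`, `classify`, and `resolution` are hypothetical and do not come from RAMCloud.

```python
from enum import Enum


class Hazard(Enum):
    RAR = "read after read"    # harmless
    RAW = "read after write"   # true dependency
    WAR = "write after read"   # false dependency
    WAW = "write after write"  # false dependency


def classify(earlier_op: str, later_op: str) -> Hazard:
    """Name the hazard when `later_op` touches an object after `earlier_op`."""
    table = {
        ("read", "read"): Hazard.RAR,
        ("write", "read"): Hazard.RAW,
        ("read", "write"): Hazard.WAR,
        ("write", "write"): Hazard.WAW,
    }
    return table[(earlier_op, later_op)]


def resolution(hazard: Hazard) -> str:
    """Map each hazard to the handling described in this section."""
    if hazard is Hazard.RAR:
        return "ignore"
    if hazard is Hazard.RAW:
        return "abort and re-execute"
    # WAR and WAW are false dependencies, removed by renaming.
    return "rename (buffer the write and apply it later)"
```

For example, `resolution(classify("write", "read"))` yields the abort-and-re-execute handling, while both write-after cases map to renaming.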

II-3. Programming model)

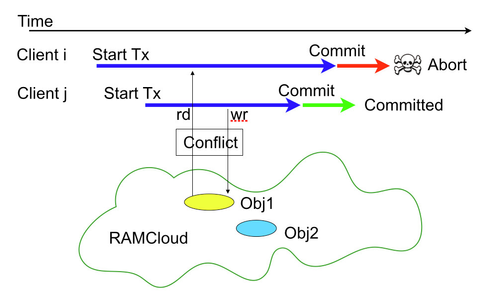

Figure. II-3a : Conflict of two Transactions

- Define a commit API for a transaction:

- A commit is a bundle of multiple conditional and non-conditional operations executed with ACID property.

- API is similar to multiOp.

- Advantage)

- Simple:

- The blue arrows in Figure II-3a (from Start Tx to Commit) are written as user code, which:

- Takes care of transaction retries caused by a nacked (aborted) commit.

- Saves version numbers and uncommitted objects and passes them to the commit API for the condition check.

- Returns an uncommitted written object for a local read (local RAW resolution).

- Flexible enough to extend to:

- Multiple transactions can reside concurrently in a single client application

- A transaction involving multiple clients can be written by exchanging the conditions among the clients

- Other transaction APIs:

- SQL-like primitives

- Transactional-memory-like model: Define the life of a transaction with start transaction and commit. The library records versions and temporary data implicitly.

- Commit API:

- Basic Idea)

- Defines a combination of operations 'compare, read, write' for each object

- Handles objects which are much larger than several bytes. Data validation is done with version number not with contents.

- Data needs to be loaded and used before commit. Then the version number is given to the commit.

- Table II-3-b shows the operations.

- Implementation

- Basically extending the existing multiOp context.

- Parameters of request: <requestId, commitObjects>

- requestId : <clientId, sequentialNumber> (Guarantees linearizability. See linearizable RPC.)

- commitObjects (To Revise) : a list of <operation, tableId, key, [condition], [blob]> where:

- operation is one of: check, overWrite, conditionalWrite

- key is the primary key of the object.

- [condition] is a version number to be compared for check or conditionalWrite.

- [blob] is the data to write for overWrite or conditionalWrite

- First commitObject is specially handled as 'anchor object'.

- To be extended for secondary index.

- response contains:

- Ack (commit successfully) or Nack (aborted)

- List of new version numbers for writes


- Note)

- No priority is given by the start order of transactions (aka. age of transaction) such as Sinfonia [SOSP2007]

- Solutions to avoid conflicts with a long-lived transaction:

- Split the transaction into multiple mini-transactions

- Write an intermediate layer to prioritize transactions by their lifetime or start time

- Issues)

- Which operations remain when the transaction is implemented in RAMCloud?

- How much can we make its implementation simpler with:

- assuming all the data fits in an RPC

- assuming all the data fits in the same segment
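The user-code responsibilities listed under 'Simple' (saving version numbers, buffering uncommitted writes, and answering local reads from the buffer) can be sketched as below. This is a minimal sketch under stated assumptions: `ClientTx` and its methods are hypothetical names, the `store` dictionary stands in for remote reads from masters, and version 0 is assumed to mean "object does not exist". Retry after a nack is not shown.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

Key = Tuple[int, str]  # (tableId, key)


@dataclass
class ClientTx:
    """Hypothetical client-side bookkeeping between 'Start Tx' and 'Commit'."""
    store: Dict[Key, Tuple[int, bytes]]  # stand-in for remote reads: key -> (version, blob)
    read_versions: Dict[Key, int] = field(default_factory=dict)
    pending_writes: Dict[Key, bytes] = field(default_factory=dict)

    def read(self, table_id: int, key: str) -> Optional[bytes]:
        k = (table_id, key)
        if k in self.pending_writes:          # local RAW resolution
            return self.pending_writes[k]
        version, blob = self.store.get(k, (0, None))
        self.read_versions[k] = version       # saved for the condition check
        return blob

    def write(self, table_id: int, key: str, blob: bytes) -> None:
        self.pending_writes[(table_id, key)] = blob  # buffered until commit

    def commit_objects(self) -> List[tuple]:
        """Build the <operation, tableId, key, [condition], [blob]> list."""
        ops = []
        for k, blob in self.pending_writes.items():
            if k in self.read_versions:
                ops.append(("conditionalWrite", k[0], k[1], self.read_versions[k], blob))
            else:
                ops.append(("overWrite", k[0], k[1], None, blob))
        for k, version in self.read_versions.items():
            if k not in self.pending_writes:
                ops.append(("check", k[0], k[1], version, None))
        return ops
```

A write that follows a read of the same object becomes a conditionalWrite on the saved version, a blind write becomes an overWrite, and a read-only object becomes a check.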

Table II-3-b

| Operation | Conditional? | Request parameter | Reply parameter | Comment |
|---|---|---|---|---|
| Compare | Yes | version | None | Check that the object is unmodified |
| Conditional write | Yes | version, blob | newVersion | Write if the object is still at the given version |
| Conditional remove | Yes | version | None | Remove if the object is still at the given version |
| Create | Yes | blob | newVersion | Create if the object does not exist (can use ConditionalWrite with version=0; see Note2 below for its use case) |
| Uc Overwrite | No | blob | newVersion | Unconditionally write when the commit succeeds. |
| Uc Remove | No | None | None | Unconditionally remove when the commit succeeds. |

Note1) We extend MultiOp to validate our implementation strategy and to measure performance. However, since the current MultiOp lacks linearizability, we may create a different RPC.

Note2) Use case of 'Create':

- Read an object before commit

- The read reports that the object does not exist

- Create the object locally on the client

- Commit with the condition that the object still does not exist on the master.
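The all-or-nothing evaluation of the operations in the table, including the 'Create' use case, could look like the following single-master sketch. It rests on several assumptions that are not in the proposal: the function name `commit`, the operation strings, a plain dictionary as the object store, version 0 meaning "does not exist", and a new version computed as the old version plus one.

```python
from typing import Dict, List, Optional, Tuple

Store = Dict[str, Tuple[int, bytes]]  # key -> (version, blob); absent key means "does not exist"

CONDITIONAL = {"compare", "conditionalWrite", "conditionalRemove", "create"}


def commit(store: Store, ops: List[tuple]) -> Tuple[bool, List[Optional[int]]]:
    """Apply all ops or none. Each op is (operation, key, version, blob)."""
    # Step 1: evaluate every condition against the current versions.
    for operation, key, version, _blob in ops:
        current = store[key][0] if key in store else 0  # assumed: version 0 == not existing
        expected = 0 if operation == "create" else version
        if operation in CONDITIONAL and current != expected:
            return False, []  # Nack: nothing is applied
    # Step 2: all conditions hold, so apply the writes and removes.
    new_versions: List[Optional[int]] = []
    for operation, key, _version, blob in ops:
        if operation in ("conditionalWrite", "create", "ucOverwrite"):
            new_version = (store[key][0] if key in store else 0) + 1  # assumed versioning rule
            store[key] = (new_version, blob)
            new_versions.append(new_version)
        elif operation in ("conditionalRemove", "ucRemove"):
            store.pop(key, None)
            new_versions.append(None)
        else:  # compare: nothing to write
            new_versions.append(None)
    return True, new_versions
```

The reply mirrors the response described earlier: an ack or nack plus the new version numbers for the writes.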

II-4. Design Outline)

The flow and log structure were modified in the first trial implementation. See 'IV) First Trial Implementation' instead and skip this section.

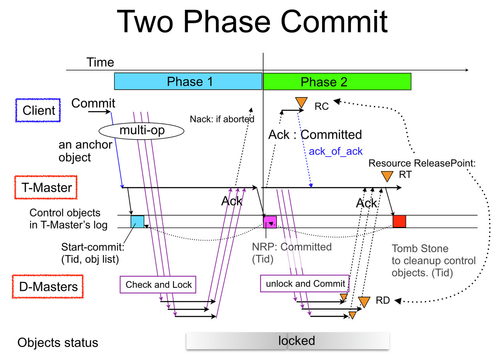

Figure II-4-1a

- Commit with 1.5 RTT and two log writes (Figure II-4-1a)

- One master takes care of commit control as a "Transaction-master" while it also works as object storage; the rest, the "Data-masters", work as ordinary data storage.

- The receiver of the anchor object (the first object) in the multi-write of the commit operation is defined as the Transaction-master.

- Transaction-master receives the following:

- List of keys the transaction referred to: both written and read objects

- Key-hash of the anchor object, which specifies the transaction-master (the master itself)

- Written objects for the master: list of (key, read-version, new version, blob)

- Keys of read objects for the master: list of (key, read-version)

- Data-master receives

- Key-hash of the anchor object, which specifies the transaction-master

- Written objects for the master: list of (key, read-version, new version, blob)

- Keys of read objects for the master: list of (key, read-version)

- In both cases, if no read preceded the write, the special value 'no-read-proceeded' is given as the read-version.

- Transaction master (T-Master) coordinates the durable commit operation by writing a Non-return-point (NRP) to its log and backups.

- D-Master checks and locks the objects as follows:

- Loop while objects exist:

- If the object is already locked by another transaction, or the version mismatches for a conditional operation:

- It sends nack to T-Master

- It cleans up all the locks for the transaction

- exit

- Otherwise lock the object

- Send ack of the transaction to T-Master. Ack contains Tid.

- T-Master receives an acknowledgement (ack) from D-Master

- In Phase1

- The transaction aborts if T-Master receives any nack.

- If T-Master receives acks from all D-Masters, it proceeds to Phase2.

- In Phase2

- All D-Masters should eventually send ack. The ack tells T-Master that the phase2 operation is completed on the D-Master, so that T-Master can prepare for the resource clean-up.

- If the T-Master crashes during the commit operation, the recovery master which stores the anchor object takes over the T-Master's role. So the Non-return-point should have the same hash as the anchor object.

- T-Master is responsible for the lock clean-up at commit or abort.

- The node where T-Master resides works as a D-Master for the objects assigned to it.

- Data structure in log and log cleaning

- An object is attributed as follows:

- During commit operation)

- unlocked (before locking)

- locked, for a committed object or a temporary object for a transactional update.

- After commit operation)

- unlocked

- removed

- The lock modifier contains Tid which consists of <clientId, anchorObjectId>.

- We can identify T-Master from anchorObjectId.

- If some client accesses an object and it remains locked even after some retries, the D-Master needs to request T-Master to clean up the lock, i.e. complete the transaction with abort or commit.

- Log structure is in section II-2-6.

- T-Master (transaction master) uses three log entries for a durable commit operation

- Start-commit: specifies from whom T-Master gets phase1 ack.

- NRP: specifies the operation is committed.

- Tomb stone for deleting control objects (Start-commit, NRP) for a completed transaction.

- Behavior in accessing locked objects is discussed in section II-2-3 and II-2-4.

- Suspend returning the response until the lock is released, assuming:

- Defines phase1 timeout:

- If T-Master hits the phase1 timeout before all acks arrive, it aborts the transaction. T-Master then eventually releases all the locks.

- Defines phase2 timeout:

- If phase1 is successful, all the locks are eventually released in phase2.

- If T-Master hits the phase2 timeout before all the phase2 acks are returned, T-Master requests all unacked D-Masters to complete Phase2 (i.e. unlock).

- According to i) and ii) above, all the locks are released within phase1-timeout + phase2-timeout + some retry time

- T-Master returns ack (committed) to the client right after successful NRP write. This is not too early because:

- Eventually commit phase2 is completed in all D-Masters as described in 3-a-iii).

- There might be a case where:

- The client that receives the ack as commit completion tries to access an object that the commit is supposed to have released, but the object still remains locked.

- This is harmless: the client can just wait or retry because the lock is released eventually.

- Resource Release:

- Successful commit: (See Figure II-4-1a )

- Resource release point: Client - RC, T-Master - RT, D-Master - RD

- T-Master releases control objects in the log at RT

- Insert Tomb stone for the Tid

- Normal case: T-Master waits for all acks from D-Masters

- Corner case: T-Master times out before it receives all acks from D-Masters. T-Master interrogates un-acked D-Masters for completion before inserting the tomb stone.

- Abort:

- Any crash without NRP:

- Control structures are located in memory. Resources are automatically released

- Abort (Nack) :

- T-Master: Any Nack from D-Master

- Client: Nack from T-Master


- Issues:

- Infinite chain of acks

- To reach RT, acks from the client and all D-Masters are needed.

- RC and RDs cannot be removed until all the acks are known to have been delivered to T-Master.

- That requires an infinite chain of acks such as ack_of_ack, ack_of_ack_of_ack, ....

- Solution

- RD: It is idempotent, so re-execution caused by a repeated request from T-Master is harmless.

- RC: Similar to the solution for linearizable RPC.

- On receiving ack or nack (abort), the client releases the transaction data, moves to the committing or aborting state, and sends ack_of_ack to T-Master.

- The largest ack_of_ack number is piggybacked on an ack of a later commit.

- RT: retries until all the acks arrive from all D-Masters and Client.

- Interrogate liveness of the client to coordinator
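The check-and-lock loop that a D-Master runs in phase 1, together with the idempotent unlock used for resource release (RD), can be modeled as below. This is a minimal in-memory sketch, not the RAMCloud implementation: the class `DMaster`, its method names, and the string results are hypothetical, and `None` stands in for the special read-version value used when no read preceded the write.

```python
from typing import Dict, List, Optional, Tuple


class DMaster:
    """Hypothetical in-memory model of a D-Master's phase-1 check-and-lock loop."""

    def __init__(self, versions: Dict[str, int]):
        self.versions = versions         # key -> current version
        self.locks: Dict[str, str] = {}  # key -> Tid holding the lock

    def phase1(self, tid: str, objects: List[Tuple[str, Optional[int]]]) -> str:
        """objects is a list of (key, read_version); None means no read preceded the write."""
        for key, read_version in objects:
            locked_by_other = self.locks.get(key, tid) != tid
            mismatch = (read_version is not None
                        and self.versions.get(key, 0) != read_version)
            if locked_by_other or mismatch:
                self.release(tid)        # clean up all locks of this transaction
                return "nack"
            self.locks[key] = tid        # otherwise lock the object
        return "ack"

    def release(self, tid: str) -> None:
        """Idempotent unlock: repeating it for the same Tid changes nothing."""
        for key in [k for k, owner in self.locks.items() if owner == tid]:
            del self.locks[key]
```

Because `release` only removes locks owned by the given Tid, a repeated request from the T-Master is harmless, which matches the RD argument above.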


II-4-2. Conflicting commits to a locked object by a preceding commit)

See II-2. Memory Renaming

- Real conflict is RAW (True dependency): transactions reading write-locked objects should be aborted and re-executed.

- Pseudo conflicts are WAR and WAW (False dependency): an immediate write by the conflicting transaction would just corrupt the locked object. The write needs to be delayed until the lock is released.

- We can detect WAW (or WAR) and re-execute the conflicting transaction as follows:

- The preceding transaction reads the object before it writes it, so that WAW is treated as RAW.

- RAR: We consider a read-read conflict harmless. The object is not locked for the upcoming read access.

- If we want to detect RAR on Object O1, we can provide another Object O2 and detect the conflict as RAW on O2 as follows:

- Definition of symbols:

- Suppose a group of transactions Tn wants to detect RAR conflicts on O1. Suppose Ti, Tj, and Tk belong to Tn and want to read O1.

- Sequence:

- Provide O2 before any of Tn starts.

- Each of Tn reads O2 before it starts accessing O1.

- Ti writes O2 before it reads O1.

- Tj and Tk are aborted by the RAW conflict if Ti successfully commits.

| Operation in another (newer) transaction's commit | Locked object was read | Locked object was written |
|---|---|---|
| read | continue (not locked) | abort (True dependency, RAW) |
| write | block (wait: WAR) | block (wait: WAW) |

II-4-3. Effect of crashes)

Table II-4-3a

| Phase | Comments | Effect of Client crash | Effect of T-Master crash | Effect of D-Master crash |
|---|---|---|---|---|
| Before Commit | Data stored in Client code | Abort | No-effect | No-effect |
| Commit Phase1 | Ack table stored in T-Master's memory. After all the commit requests have arrived at both T-Master and D-Masters, a crash of any client has no effect on the commit operation. | Abort or No-effect | Abort | No-effect |
| After Phase2 | Status is durable in T-Master's log | No-effect | No-effect | No-effect |

II-4-4. Log Structure on Masters)

- Inserts object modifiers in the log to lock and unlock the object during the commit operation

- A chain of modifiers is created by a sequence of transactions (commits)

- Cleanup: associate lock and unlock with the transaction id and clean up (now investigating the log cleaner for the modification).

- Some of them are terminated with a tomb stone.

- Object modifier example in log

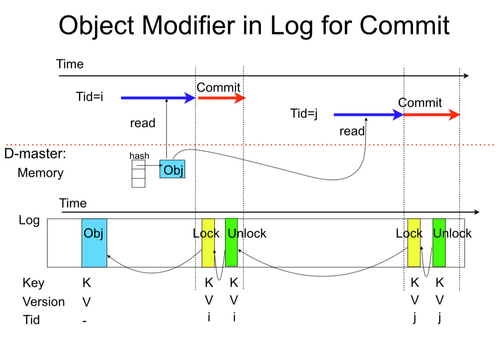

- Figure II-4-4a: sequence of transactions read the same object

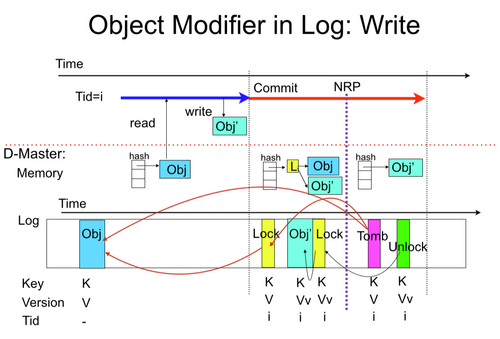

- Figure II-4-4b: modifier objects for object write at successful commit:

- both old object and newer (transactional temporal) object are locked at the same time. Log objects might be optimized such as "temporal object for write + lock", etc

- See section IV for the implementation.

Figure II-4-4a (Consecutive Read Lock by transactions)

Figure II-4-4b (Object Modifiers for conditionalWrite)

III. Corner Cases)

- Linearizability issue: Either the commit request, nack, or ack is undelivered.

- (Resolved) Partial data loss in commit request with multi-op.

- Solution) Retry original request with timeout for ack(committed)/nack(aborted). See section IV-2-2.

- (Alternative method) Extending linearizable operations to multi-op using multiple RPCs

- Automatic retries for un-delivered RPCs?

- (Almost resolved. See section II-4-1 item5) Resource management (GC) and racing

- TransactionId

- Control objects in T-Master's log

- Lock/unlock objects in D-Masters' log conflicting with cleaning and object migration


- (Resolved) Corner cases during crash behavior transition

- Transition is: no-effect - abort (Volatile) - commit (NRP)

- Solution)

- Client's state is always volatile. If a client crashes, masters clean up resources by asking the coordinator for expired clientId lease information.

- On T-Master, all the information is volatile and is lost if it crashes before NRP is written.

- The recovery master for the T-Master, which receives the anchor object, starts aborting the transaction because the D-Master list (See Figure IV-3b) is lost.

- If NRP is found on the recovered T-Master, the T-Master continues the commit.

- After recovery, D-Master decides its behavior by asking T-Master for the transaction's state.

- Transaction id of a locked object is found in the lock.

- (Resolved) Arrival delay of the anchor object to T-Master

- The ack arrives from D-Master before the anchor object arrives at T-Master

- T-Master gets the Tid from the ack and allocates the transaction's control structure. See section IV-4-2, item 1-c.

IV) First Trial Implementation

We will implement a simple trial RAMCloud with 'multi-object transaction'. We are going to evaluate the performance of our first implementation with micro-benchmarks and some sample applications, and estimate the improvement from different implementations.

IV-1) Overall design

IV-1-1) Transaction Id

- TransactionId = <ClientId, sequentialNo>

- TM location is hashed by ClientId.
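The point of hashing the TM location by ClientId alone is that every transaction of one client lands on the same master. A small sketch of that property follows; the SHA-256 hash, the modulo over a list of master names, and the names `TxId` and `tm_for` are all assumptions made for illustration and are not how RAMCloud locates masters.

```python
import hashlib
from typing import List, NamedTuple


class TxId(NamedTuple):
    client_id: int
    sequential_no: int


def tm_for(tx: TxId, masters: List[str]) -> str:
    """Pick the TM from the client id only, so all Tx of one client map to one master."""
    digest = hashlib.sha256(str(tx.client_id).encode()).digest()
    # The sequence number is deliberately not part of the hash input.
    return masters[int.from_bytes(digest[:8], "big") % len(masters)]
```

With this choice, `tm_for(TxId(7, 1), masters)` and `tm_for(TxId(7, 2), masters)` always return the same master.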

IV-1-2) Data and control flow with RPC.

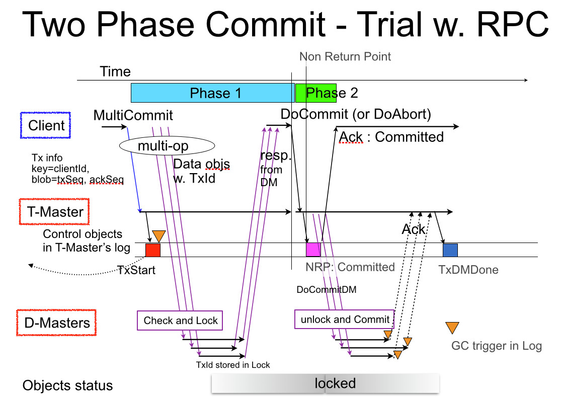

Figure IV-1-2a

IV-1-2-1) Initiation by client

- MultiCommit

- Sending startTx to TM

- Piggybacks TxDone information

- The largest sequence number among the completed (acked or aborted) transactions of the client is reported to the transaction master by piggybacking it on a MultiCommit request

- Sending Operation list to Data master

- Including TxId

- DoCommit / DoAbort

- Including TxId, primary keys of objects in the transaction

IV-1-2-2) Initiation by Transaction Master

- DoCommitDM

- unlock and commit

- DoAbortDM

- unlock and abort

IV-1-2-3) False dependency

The false dependencies 'WAR' and 'WAW' described in 'Figure II-2a' could instead be handled by blocking (holding) the losing transaction, as in 'Section II-4-2. Conflicting commits to a locked object by a preceding commit)', to reduce abort and retry overhead.

In our first trial implementation, we treat them as conflicts and abort the losing transaction. We are going to evaluate this with micro-benchmarks and some sample applications to estimate the improvement from the more complex implementation.
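The difference between the two policies can be expressed as one decision function with a switch. This is a sketch only: the function name `on_conflict`, the flag `block_false_dependencies`, and the string values are hypothetical.

```python
def on_conflict(lock_kind: str, new_op: str, block_false_dependencies: bool = False) -> str:
    """Decide what a newer commit does when it meets an object used by an older commit.

    lock_kind: 'read' or 'written' (how the older commit used the object).
    new_op:    'read' or 'write' (what the newer commit wants to do).
    """
    if lock_kind == "read" and new_op == "read":
        return "continue"  # RAR: harmless, the object is not locked for reads
    if lock_kind == "written" and new_op == "read":
        return "abort"     # RAW: true dependency, always aborted
    # Remaining cases are WAR and WAW (false dependencies).
    return "block" if block_false_dependencies else "abort"
```

The default (`block_false_dependencies=False`) corresponds to the first trial implementation, while passing `True` corresponds to the blocking behavior of section II-4-2.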

IV-1-2-4) Transaction Abort

- A client sends a DoAbort request to TM if any MultiCommit returns nack because:

- the object is locked by a different transaction (a different transaction is in phase1 of its commit with the object).

- the condition check (version check) for the object failed

- Transaction Master (TM) sends DoAbortDM to DM to abort the transaction and unlock the object. The operation is triggered by:

- DoAbort request from Client

- Transactions without NRP at TM recovery (a Tx start log entry is needed in the TM log.)

- Client lease expiration

- Timeout for the DoCommit request from Client (Tx start is recorded in memory, because a pending transaction without NRP is aborted by TM recovery; see b. above).

- Data Master (DM) aborts transaction in the following cases

- DoAbortDM request from TM

- No NRP found in TM for the transaction at Data Master recovery. If TM recovery is in progress, DM needs to retry after TM recovery.

- Client crash: a client crash during phase1 may leave objects locked. The lock prevents object access from other clients, like a master crash. It should be released within a few seconds to keep the system available, as follows:

- TM is notified of the client lease expiration by the coordinator. Since the TM for the transaction is hashed by clientId, the coordinator can easily decide which TM to notify. (May take a while)

- Erroneous client code: The client code is not error free. Even if the client still responds to pings from the coordinator, the client library may hang or live-lock before completing the commit operation. The solution is:

- A watchdog timeout in TM's memory initiates aborting the transaction. (Faster; normally this works.)

- If TM crashes, TM automatically aborts all transactions without NRP. To tell the recovered TM about ongoing transactions, we provide a 'startTx' log entry (See next section for the new log entry).

- If we provide the object list in 'startTx', TM can selectively send abort requests to the relevant masters.

- Without the list, TM needs to broadcast abort requests to all the masters. (current proposal considering the corner case in next bullet.)

- Following corner case is eventually resolved:

- Client issues MultiCommit

- Some DMs lock objects

- Before TM writes 'startTX' to log, the TM crashes

- The recovered TM will not start the transaction abort.

- However the locks are eventually released:

- TM eventually recognizes the crash:

- If the client is still alive, the linearizable RPC resends the MultiCommit request to TM. (Faster) Since no startTx is found in the log at recovery, TM treats the request as a new request. If some DM has already accepted the original request, it does not cause any problem, because the client retries the request with the same TxId and the DM can tell that the request is a retry for the object.

- If the client crashed too, the coordinator notifies the TM of the clientId expiration. (Slower)

- Since TM does not know the DMs involved in the transaction, it broadcasts the Tx abort request to all masters.

IV-1-3) New log entries with contents

- TM (Transaction-management Master)

These entries for the same client identifier are located on the same master, i.e. objectFinder is called by clientId.

- TxStart : Contents = (TxId=[ClientId, SeqNo] , ackedSequenceNumber)

- NRP: (TxID, (list of primary keys))

- TxDMDone: (TxId that received all acks from DM)

- TxAbort : Transaction is aborted : (TxID, (list of primary keys)). Not sure: is it needed for efficiency or object migration? If it is needed, it is simple to move 'list of primary keys' to TxStart.

- DM (transaction Data Master)

- Lock: (TxId, Object info= [primary key, version])

- Unlock: (TxId, Object info= [primary key, version])

IV-1-4) Log Cleaning

- TM log

- Regular cleaning) NRP entries for the client are cleaned up to the minimum of (sequence number in TxDone@Client, sequence number in TxDone@DM)

- Client lease expiration triggered) Cleaning is initiated after asking the coordinator whether the clients that the TM manages are still active

- DM log: periodically cleaning following pairs

- Completed transaction: Lock-Unlock pair with the same TxId

- Deleted object: (Object, Lock, Unlock, Lock, Unlock, Lock, ...., Tomb stone ) sequence with the same key and version.
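The two cleaning rules above reduce to simple computations, sketched here under the assumption that log entries are available as plain tuples. The names `cleanable_txids` and `nrp_clean_limit` are hypothetical, and the sketch ignores the actual log cleaner mechanics.

```python
from typing import List, Set, Tuple

DmEntry = Tuple[str, str]  # (entry type 'Lock' or 'Unlock', TxId)


def cleanable_txids(log: List[DmEntry]) -> Set[str]:
    """TxIds whose Lock and Unlock entries are both present, i.e. completed transactions."""
    locked = {tx for kind, tx in log if kind == "Lock"}
    unlocked = {tx for kind, tx in log if kind == "Unlock"}
    return locked & unlocked


def nrp_clean_limit(tx_done_client_seq: int, tx_done_dm_seq: int) -> int:
    """NRP entries of one client can be cleaned up to the smaller of the two sequence numbers."""
    return min(tx_done_client_seq, tx_done_dm_seq)
```

A transaction that has a Lock entry but no matching Unlock is still in progress, so it is left out of the cleanable set.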

IV-1-5) Linearizable RPC

- Implementing MultiCommit with MultiOp. Since MultiCommit is a conditional operation, it needs to be implemented on linearizable RPC.

IV-1-6) Recovery

- Operation as a Data Master (DM)

- Recovers unlocked (normal) Objects

- Recovers locked Objects according to the result of an inquiry to the TM identified by the TxId in the lock entry.

- Proceeds with the commit if NRP exists for the TxId.

- Cannot abort the transaction even if NRP does not exist, because if the ack has already been sent to the client, the client may decide to go to phase2 (DoCommit). The final decision for the commit should be made by TM. When DoCommit arrives at TM, the transaction can be either committed or aborted; TM decides based on the crash information it knows at the decision point.

- If the master crashed before sending the ack to the MultiCommit request, the client retries the MultiCommit. The DM checks the object hash in memory, continues the commit operation, and responds to the client with Ack or Nack.

- Operation as a Transaction Master (TM)

- Recovers TxDoneClient, NRP, TxDoneDM onto the recovery master hashed by ClientId in these entries.

- Rebuilds in-memory control structure for transactions on recovered TM.

- Recovered TM responds to requests or acks.

- Responds to DoCommit from clients, which might be a first request or a retry

- Receives DoCommitDM acks from DM:

- Acks corresponding to DoCommitDM requests issued by the crashed TM may be discarded because that TM has already crashed. In-memory information about returned acks is also lost.

- Requests DoCommitDM to DM if the recovered TM finds NRP.
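The TM side of recovery can be sketched as a log replay that rebuilds per-transaction state and derives the follow-up action. This is an illustrative sketch: the entry names follow section IV-1-3, but the tuple layout, the function `recover_tm`, and the action strings are assumptions, and the log is assumed to be replayed in write order.

```python
from typing import Dict, List, Tuple

TxIdT = Tuple[int, int]          # (clientId, seqNo)
LogEntry = Tuple[str, TxIdT]     # (entry type, TxId)


def recover_tm(log: List[LogEntry]) -> Dict[TxIdT, str]:
    """Replay TM log entries and return the action to take for each transaction."""
    state: Dict[TxIdT, str] = {}
    for entry_type, tx_id in log:
        if entry_type == "TxStart":
            state.setdefault(tx_id, "started")
        elif entry_type == "NRP" and state.get(tx_id) != "done":
            state[tx_id] = "nrp"
        elif entry_type == "TxDMDone":
            state[tx_id] = "done"
    actions: Dict[TxIdT, str] = {}
    for tx_id, s in state.items():
        if s == "nrp":
            actions[tx_id] = "send DoCommitDM"  # NRP found: continue the commit
        elif s == "started":
            actions[tx_id] = "send DoAbortDM"   # no NRP: abort the pending transaction
        else:
            actions[tx_id] = "none"             # all DMs already acked phase 2
    return actions
```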


IV-2) Corner cases

Corner cases should be resolved as follows:

- Non reversible operations (below) are recorded in Log synchronously

- Written object

- Lock

- Unlock

- Tomb stone

- NRP

- Final decision of commit is delegated to TM as mentioned in IV-1-6 (DM list #2). The decision can satisfy ACID (Atomic Consistent Isolated Durable) constraint.

We would like to list corner cases and verify the strategy works.

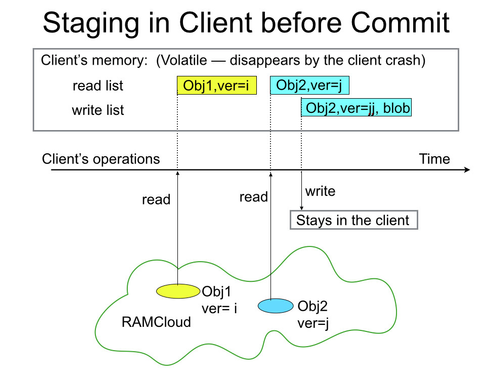

IV-3) Client

- Flow

- Client program constructs MultiCommit object lists with RAMCloud libraries.

- Below are implemented in MultiCommit RPC.

- Sends MultiCommit request to DMs and a TM using linearizable RPC. A new transaction id=<clientId, seqNumber, ackedSeqNumber> is inserted before sending MultiCommit request.

- Waits response of MultiCommit request

- Sends a request according to the MultiCommit responses:

- Sends DoAbort request to TM if any of the responses is nack

- Sends DoCommit request to TM if all of the responses are ack.

- Waits for the response of item iii.

- The RPC returns Ack (committed) or Nack (aborted)

- Nack may trigger a trap??

- Data Structure: all the data is in-memory and volatile.

- TxId

- MultiCommit object list

- MultiCommit return list: success or fail state is given for each object

- When the MultiCommit response is returned, the client library scans the response and decides whether to send DoCommit or DoAbort to TM.
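The client flow above (send MultiCommit, scan the responses, then send DoCommit or DoAbort to the TM) can be sketched with the RPCs passed in as callables. The function `run_commit` and its parameters are hypothetical; real requests would go through the linearizable RPC layer, which is not modeled here.

```python
from typing import Callable, Dict, List


def run_commit(masters: List[str],
               multi_commit: Callable[[str], str],
               tm_request: Callable[[str], str]) -> str:
    """Run phase 1 against every master, then ask the TM to commit or abort.

    multi_commit(master) returns 'ack' or 'nack' for that master's part.
    tm_request(request) sends 'DoCommit' or 'DoAbort' and returns 'Ack' or 'Nack'.
    """
    responses: Dict[str, str] = {m: multi_commit(m) for m in masters}
    all_acked = all(r == "ack" for r in responses.values())
    request = "DoCommit" if all_acked else "DoAbort"
    return tm_request(request)
```

Passing the RPCs as callables keeps the decision logic testable without a network.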

IV-4) D-Master

A normal master works as a D-Master too.

- Uses linearizable RPC; retry is encapsulated.

- Modifies the log structure first, then the corresponding in-memory structure.

- In memory (Volatile) data structure: See Figure IV-3b

- Main hash:

- Add <locked, r/w> to the hash entry to tell that the object is locked.

- Committing transaction map:

- <Tid, Status, numToProcess, numProcessed, (Object List) >

- numToProcess : number of objects to be processed

- Phase1: number of objects to be checked. Since some objects are for unconditional writes, it can be smaller than the number of objects for the DM.

- Phase2: number of objects to be committed.

- numProcessed : number of objects completed. If it matches numToProcess, DM returns ack.

- Status is either:

- phase1 started

- phase1 acked

- phase2 started

- phase2 acked

- Object List is list of: <status, txOperation, cur-obj, new-obj>

- status: status of the object

- ready

- phase1Passed

- phase1Failed: Phase1 check failed.

- phase2Completed: Phase2 process (unlock) completed

- txOperation : duplication of operation command given by MultiCommit including:

- transactional operation to the object in Table II-3-b

- version to be compared if the operation is conditional

- cur-obj : pointer to current (committed) object in hash

- new-obj : pointer to the overwriting object at successful commit, which is written over cur-obj at phase2.

- Log Structure:

- See Section 'II-2-4' for basic log entry linkage at commit operation.

- See Section 'IV-1-3) New log entries with contents' for introducing log entries in this implementation.

- Figure IV-4a depicts in-memory data structure of DM. (Not quite exact.... )

Figure IV-4a
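An entry of the committing transaction map described above could be modeled as follows. The field names mirror the list, but the Python types, the `mark_processed` helper, and the way the status string is advanced are assumptions made for illustration; the cur-obj and new-obj pointers are omitted.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class TxObject:
    tx_operation: str              # operation copied from the MultiCommit request
    version: Optional[int] = None  # version to compare, for conditional operations
    status: str = "ready"          # ready, phase1Passed, phase1Failed, phase2Completed


@dataclass
class CommittingTx:
    tid: tuple
    num_to_process: int
    objects: List[TxObject] = field(default_factory=list)
    num_processed: int = 0
    status: str = "phase1 started"

    def mark_processed(self) -> bool:
        """Count one finished object; return True when the DM should return ack."""
        self.num_processed += 1
        if self.num_processed == self.num_to_process:
            # 'phase1 started' -> 'phase1 acked', 'phase2 started' -> 'phase2 acked'
            self.status = self.status.replace("started", "acked")
            return True
        return False
```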


IV-4) T-Master

Normally collocates with D-Master.

IV-4-1) Transactional Control Logic

See Figure IV-1-2a for overview.

- Volatile data structure in memory:

- Committing transaction map

- Tid : transaction Id which consists of <clientId, seqNumber>

- Status: transaction status, either:

- Started

- NRP

- Completed : committed transaction whose sequence number is smaller than both of:

- ackedSeqNumber in the TxStart object given by MultiCommit

- sequenceNumber in TxDMDone, which means the phase2 operations on the DMs are all completed.

- Aborted : abort request is sent.

- Object list


- Persistent data is located in log: See Figure IV-1-2a

V. Comparison against other implementations)

VI. References)

- Sinfonia [SOSP2007]

- Parallel Processor Design

- Transactional Memory http://en.wikipedia.org/wiki/Transactional_memory

- Speculative Multithread Processor

- NEC Merlot: ISSCC, 2000, http://bit.ly/1ly07Pa

- NEC Pinot: Micro38, 2005, http://dl.acm.org/citation.cfm?id=1100541

Old materials)

Until Nov 12, 2014)

IV-1) Transaction Id

TransactionId is a transaction identifier in T-Master or D-Master for

- memory data entries

- log entries

- TransactionId = <requestId, anchorObjectId>

- requestId = <clientId, sequenceNumber>

- the parameter in the commit operation given by the client, as in an ordinary linearizable RPC

- clientId is given by the coordinator

- sequenceNumber is a 64-bit number that increases monotonically without garbage collection

- Using the same resource management as the linearizable RPC.

- anchorObjectId

- A hash which specifies T-Master for the transaction

- If the same client issues another transaction that includes the same anchorObjectId as a live transaction, the issued transaction is immediately aborted because it conflicts with one of the previous active transactions.

Log Structure: See Section II-2-5: detailed implementation will be considered soon.

- Object modifiers are used only for log replay and cleaning which follows the new space/computing effective log structure for cleaning.

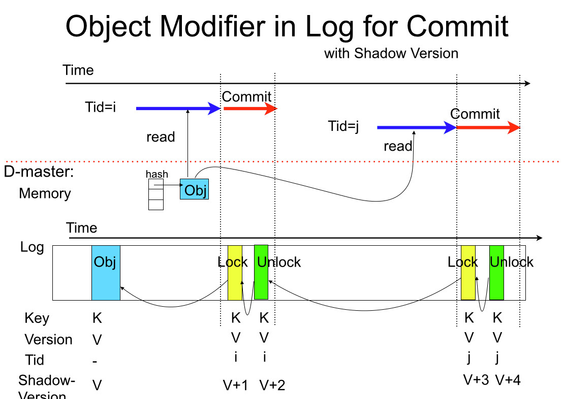

- Tid=<ClientID, Seq, AnchorObjectID> includes no ordering information between different Tids generated in different clients. We use shadow-version to identify the final state of each log entry.

- Object-modifier: We want to re-define each log entry as follows:

- Object: consists of primary-hash, tableID, keys, version, blob, etc

- Object modifier: consists of TransactionID, primary-key, modifierVersion (shadowVersion in the figure), state

State is either:

- tomb stone

- lock

- unlock

- NRP (for transaction state management object)

- All the log entries involved in a transaction must be modified by lock. In log replay, we can recover in-memory control structure for the transactions handled by the recovered TM(Transaction master) or DM (Data Master).

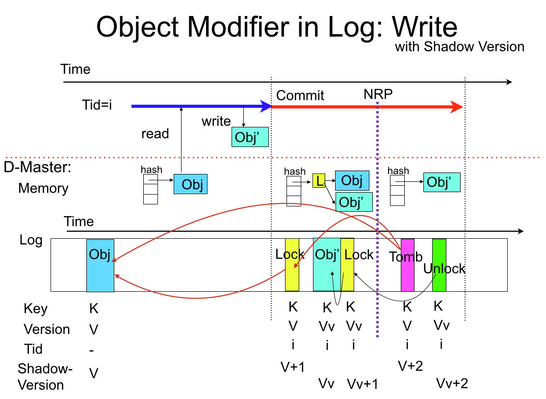

Figures II-4-4a and II-4-4b are modified as follows:

Figure IV-3a: Object state management in Log with shadow version in consecutive reads.

Figure IV-3b: Object state management in Log with shadow version in write.

IV-4) T-Master

Normally collocates with D-Master.

IV-4-1) Transactional Control Logic

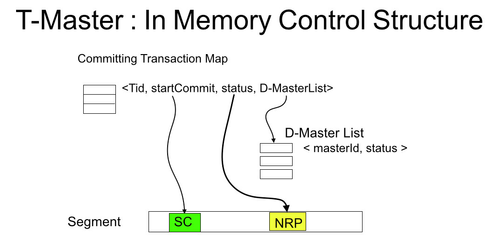

- Volatile data structure in memory: See Figure IV-4-2a

- Committing Transaction Map

- Tid : transaction Id which consists of <clientId, anchorObjectId>

- Master which stores anchorObject is T-Master

- startCommit always points log entry SC (StartCommit; see Figure II-2-2a)

- objectList can be used to verify whether some operation is performed to all objects.

- status points to a control object in the log that tells the current transaction status, which is either:

- StartCommit

- NRP

- Done (Tomb Stone)

- D-Master List points to the D-Master List.

- Tid : transaction Id which consists of <clientId, anchorObjectId>

- D-Master List contains all D-Masters' information

- T-Master has the D-Master's role. T-Master has a D-Master entry too.

- masterId: id of the master

- status is either of:

- p1acked : received phase1 (can commit?) ack

- p1abort : received abort request from this D-Master

- p2acked : received phase2 (do commit) ack

- The anchor object may arrive at the T-Master late, so that an ack from a D-Master arrives before it (see Section III, item 3).

- Can allocate committing transaction map from the ack.

- Get Tid from ack

- Leave startCommit and status Null

- Allocate partial D-Master list which contains only the D-Master which sent the ack.

- The rest of the D-Master list will be allocated when the anchor object arrives.


Figure IV-4-2a (Out-dated)
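The volatile structures listed above, including the case where a D-Master ack arrives before the anchor object, can be sketched as follows. This is an illustration under assumptions: the `PENDING` status, the method names and the use of a plain dict are not taken from the design.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class DMasterStatus(Enum):
    PENDING = "pending"    # assumed initial state, not named in the text
    P1ACKED = "p1acked"    # received phase1 (can commit?) ack
    P1ABORT = "p1abort"    # received abort request from this D-Master
    P2ACKED = "p2acked"    # received phase2 (do commit) ack


@dataclass
class CommittingTransaction:
    tid: tuple                          # (clientId, anchorObjectId)
    start_commit: Optional[int] = None  # log position of SC, None until known
    status: Optional[str] = None        # StartCommit / NRP / Done
    d_masters: dict = field(default_factory=dict)  # masterId -> DMasterStatus


class CommittingTransactionMap:
    def __init__(self):
        self.entries = {}

    def on_phase1_ack(self, tid, master_id, ok=True):
        """Record an ack; allocate a partial entry if the anchor is late."""
        tx = self.entries.setdefault(tid, CommittingTransaction(tid))
        tx.d_masters[master_id] = (
            DMasterStatus.P1ACKED if ok else DMasterStatus.P1ABORT)
        return tx

    def on_anchor_object(self, tid, sc_position, master_ids):
        """The anchor object arrived: fill in the rest of the entry."""
        tx = self.entries.setdefault(tid, CommittingTransaction(tid))
        tx.start_commit = sc_position
        tx.status = "StartCommit"
        for master_id in master_ids:
            # Keep the status of D-Masters whose ack arrived early.
            tx.d_masters.setdefault(master_id, DMasterStatus.PENDING)
        return tx

    def all_phase1_acked(self, tid):
        tx = self.entries[tid]
        return tx.start_commit is not None and all(
            s is DMasterStatus.P1ACKED for s in tx.d_masters.values())
```

An early ack leaves `start_commit` and `status` as `None` and creates a D-Master list with a single entry. The remaining entries are added when the anchor object arrives.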


On July 10, 2014)

(The 'compare & read' combination is meaningless because the comparison uses the version number, not the value, acquired by a read preceding the commit request.)

| Command: compare | Command: read | Command: write | Behavior | Parameter: Request | Parameter: Reply | Comment |
|---|---|---|---|---|---|---|
| Yes | No | No | Compare | version | None | Check if the object is unmodified |
| Yes | No | Yes | Conditional write | version, blob | newVersion | Write if the object is still at the given version |
| No | Yes | No | Read | None | version, blob | Read if the commit succeeds |
| No | No | Yes | Overwrite | blob | newVersion | Write without read if the commit succeeds (renaming for WAR, WAW hazards) |
| No | Yes | Yes | Swap | blob | newVersion | Swap if the commit succeeds |
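To make the table concrete, here is a small sketch that maps the compare/read/write flags of each entry to a behavior and applies a commit to an in-memory store. It assumes that a missing object has version 0, that each write increments the version by one, and that Swap also returns the old version and blob. The `Entry` type and function names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Entry:
    key: str
    compare: bool = False
    read: bool = False
    write: bool = False
    version: Optional[int] = None   # request parameter used by compare
    blob: Optional[bytes] = None    # request parameter used by write


BEHAVIORS = {
    (True, False, False): "Compare",
    (True, False, True): "Conditional write",
    (False, True, False): "Read",
    (False, False, True): "Overwrite",
    (False, True, True): "Swap",
}


def behavior(e: Entry) -> str:
    combo = (e.compare, e.read, e.write)
    if combo not in BEHAVIORS:
        raise ValueError(f"unsupported combination: {combo}")
    return BEHAVIORS[combo]


def commit(store: dict, entries: list):
    """store maps key -> (version, blob). Returns replies, or None on abort."""
    for e in entries:
        behavior(e)  # reject combinations that are not in the table
    # Check phase: every compare must match the current version.
    for e in entries:
        if e.compare and store.get(e.key, (0, None))[0] != e.version:
            return None  # abort, nothing has been modified
    # Apply phase: reads see the pre-commit state, writes bump the version.
    replies = {}
    for e in entries:
        version, blob = store.get(e.key, (0, None))
        reply = {}
        if e.read:
            reply["version"], reply["blob"] = version, blob
        if e.write:
            store[e.key] = (version + 1, e.blob)
            reply["newVersion"] = version + 1
        replies[e.key] = reply
    return replies
```

All compares are checked before any write is applied, so a failed compare leaves the store untouched.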

On July 3, 2014)

II-3. Programming model)

As seen in Figure II-3a, a transaction proceeds as follows:

- A client starts a transaction and receives a transaction identifier (Tid).

- The client performs object accesses for the Tid.

- The RAMCloud system watches for access conflicts with other transactions.

- The client issues a commit operation for the Tid. Among conflicting transactions, only one commit completes successfully and the others are aborted. All operations done by an aborted transaction are cancelled (rewound).

- Read is not treated as a conflict

- If we would like to abort a transaction on a read-read conflict, we can allocate a separate flag and cause a write-write conflict on it to detect the conflict.

- All other conflicts are detected and resolved by renaming, or by retrying after a transaction abort.


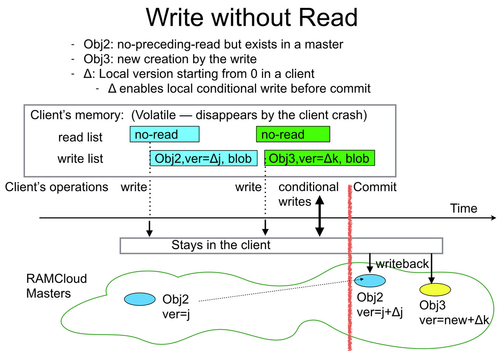
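The programming model above can be illustrated with a single-process sketch: reads record the observed version, writes are buffered in the client, and commit succeeds only if every version read is still current. It ignores distribution, locking and durability. `Master` and `Transaction` are illustrative names, not the RAMCloud API.

```python
class Master:
    """Holds committed objects as key -> (version, blob)."""

    def __init__(self):
        self.objects = {}

    def read(self, key):
        # Assumption: a missing object is reported as version 0.
        return self.objects.get(key, (0, None))


class Transaction:
    def __init__(self, master):
        self.master = master
        self.read_versions = {}   # key -> version observed by this transaction
        self.writes = {}          # key -> blob, buffered in the client

    def read(self, key):
        if key in self.writes:    # a read after a local write sees that write
            return self.writes[key]
        version, blob = self.master.read(key)
        self.read_versions.setdefault(key, version)
        return blob

    def write(self, key, blob):
        self.writes[key] = blob

    def commit(self):
        """Return True on success; False means aborted, nothing applied."""
        for key, seen in self.read_versions.items():
            if self.master.read(key)[0] != seen:
                return False      # RAW: another commit modified the object
        for key, blob in self.writes.items():
            version, _ = self.master.read(key)
            self.master.objects[key] = (version + 1, blob)
        return True
```

If two transactions read and then write the same object, the one that commits first wins and the other is aborted. A write without a preceding read commits without any version check.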

- Version number: (Need discussion for the definition)

- Proposal 1) Using local version number: See Figure II-2-1b

- Create a local version number, starting from zero, for an object written without a read. The local version number is a kind of delta on the version number.

- Add the delta when the object is written back to the master at commit.

- A conditional write can be performed locally with the local version number.

- If the object is created and then deleted, the definition says the global version number must be bigger than the previous version. To follow this scheme, the local version number delta should be taken from a local next-version-number, which starts from zero when the transaction starts and is incremented by one each time it is used.

- But this does not match the global version number rule: the version number is incremented by one per write access.

- Proposal 2) Refer to the master version number: see Figure II-2-1c

- Pros)

- This exactly follows the current definition of version number increment.

- Cons)

- An additional RPC is needed to fetch the version number from a master.

- Needs to mark that the version number was not acquired by a preceding read. Otherwise, a needless RAW conflict is detected, which leads to a transaction abort.


- No support:

- Single transaction owned by multiple clients.

Figure II-3a

II-4. Design Outline)

- Commit is a linearizable operation containing a large chunk of objects to be conditionally updated. We can apply the idea of linearizable operations on RAMCloud (see liniarizable+RPC)

- Client allocates a unique transaction identifier locally using its unique client id (see liniarizable+RPC)

- No priority is given based on the start order of transactions (i.e. the age of a transaction), as in Sinfonia [SOSP2007].

- When multiple transactions have object access conflicts, the earliest commit has priority and succeeds.

- We assume the probability of access conflict can be kept small in a low-latency system.

- If some users need quite long-lived transactions and must avoid their cancellation by another transaction started later, they can implement a more sophisticated transaction management system on top of our simple transaction model.

- All data written before commit is stored in the client and is volatile.

- We can update data in masters with multi-writes for performance.

- During the commit operation, we define an NRP (non-return point). Before the NRP, a transaction is aborted by any crash. After the NRP, the transaction is committed regardless of any crash or recovery.
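The NRP rule can be expressed as a recovery decision over the control records found in a replayed log. The sketch below assumes the status names StartCommit, NRP and Done from the T-Master status list. The `recover` function and the outcome strings are illustrative only.

```python
from enum import Enum


class Record(Enum):
    START_COMMIT = "StartCommit"
    NRP = "NRP"
    DONE = "Done"


def recover(log):
    """Decide the outcome of each transaction found in a replayed log.

    `log` is a list of (tid, Record) pairs in log order.
    """
    seen = {}
    for tid, record in log:
        seen.setdefault(tid, set()).add(record)
    outcome = {}
    for tid, records in seen.items():
        if Record.DONE in records:
            outcome[tid] = "finished"  # nothing left to do
        elif Record.NRP in records:
            outcome[tid] = "commit"    # past the NRP: must be completed
        else:
            outcome[tid] = "abort"     # crashed before the NRP
    return outcome
```

A transaction whose log holds only StartCommit is aborted on recovery, while one that has reached NRP is completed.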

II-4-1. Design Issues)

- Before commit

- Assignment of the transaction Id (Tid)

- Stores temporary data in the client

- Records read information (key, version) — Figure II-4-1a

- Records write information (key, version, blob)

- Even when a write follows a read, both the read-version and the new-version are recorded. The read-version is used to verify conflicts on the object (see Figure II-2-1a).

- For a write without a read, we just record 'no-read-proceeded' to detect WAR and WAW, which are pseudo conflicts and can be handled without a transaction abort.

- If a read follows the write-without-read, the result depends only on the cluster-local write, so the sequence is isolated from external events.

- We do not need to interrogate the read-version if no read is executed before the write. This was originally considered in Figure II-2-1b, as follows:

- Client interrogates the server for the current version to verify its modification at commit.

- If the write creates a new object, a version-reserve-object is allocated in the server and the log to record the version number of the new object for conflict detection at commit time.

- Commit operation (see section II-2-2 )

- Fast commit: 1.5 RTT and two log writes

II-4-3. Interaction between transactional and non-transactional operation)

Figure II-2-7a

- Order of operations. See Figure II-2-7a

- Logical view) According to the definition of commit, Op-T is executed atomically at some point during commit.

- Transactional operation is logically executed in ACID (Atomic, Consistent, Isolated, Durable ) at commit time.

- A non-transactional operation is executed at its access time and is categorized as:

- Op-N: occurs before commit, or within commit before the object is locked.

- Op-NC: occurs after the object is locked.

- Practically)

- If Op-T is a read, it is physically performed at some point after StartTx, and the version read is recorded in the transaction.

- Written objects are eventually updated within some interval of the commit operation.

- Order of operations:

- Suppose Op-NC is a non-transactional operation that physically occurs after the modification check of commit.

- Logical order is first) Op-N, second) Op-T, third) Op-NC


- Interaction of non-transactional operation to transactional operation

- Follow conflict matrix in Table II-2-3a (RAW, WAR, WAW)

- Conflict between Op-N and Op-T. See Table II-2-7b

- Simplest implementation and natural behavior

- Only Op-N-written to Op-T-read (RAW) results in an abort.

- The no-read-proceeded flag is recorded when no read of the object precedes its writes. It can be used to distinguish RAW from WAW.

- By sending non-transactional operations to a master without consulting the client's local transactional information, the above behavior i) is realized.


- Conflict between Op-T and Op-NC. See Table II-2-7c

- Should be consistent with the behavior before commit.

- Minimize the performance degradation of traditional operations (see III. Implementation).

- Commit completes in a short period of time, so the probability of conflict between commit and Op-NC is very small.

- Add two bits <lock-for-commit, r/w> to the hash entry in order to:

- Block non-transactional writes until the ongoing commit is completed or aborted.

- Continue non-transactional reads if the lock is for a read commit.

- Block non-transactional reads if the lock is for a write commit.

Table II-2-7b: Conflict between Op-N and Op-T

| Op-T (rows) / Op-N (columns; always completes) | read | written |
|---|---|---|
| read | continue | abort (True dependency, RAW) |
| write | continue | continue |

Table II-2-7c: Conflict between Op-T and Op-NC

| Op-NC (rows) / Op-T (columns; locked for commit) | read | written |
|---|---|---|
| read | continue | block (wait) |
| write | block (wait) | block (wait) |
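The two conflict tables and the <lock-for-commit, r/w> bits can be written as two small decision functions. This is a sketch only: the `HashEntry` layout and function names are assumptions, and blocking is represented by a returned string rather than by actually waiting.

```python
from dataclasses import dataclass


@dataclass
class HashEntry:
    lock_for_commit: bool = False
    write_lock: bool = False   # the r/w bit: True = locked for a write commit


def op_nc_action(entry: HashEntry, op: str) -> str:
    """Action for a non-transactional 'read' or 'write' on this entry."""
    if op not in ("read", "write"):
        raise ValueError(op)
    if not entry.lock_for_commit:
        return "continue"          # no commit in progress on this object
    if op == "read" and not entry.write_lock:
        return "continue"          # read against a read-commit lock
    return "block"                 # wait until the commit completes or aborts


def op_n_effect(op_t: str, op_n: str) -> str:
    """Effect on the transaction of an Op-N issued before the lock is taken.

    op_t is 'read' or 'write'; op_n is 'read' or 'written'.
    """
    if op_t == "read" and op_n == "written":
        return "abort"             # true dependency (RAW)
    return "continue"
```

Only one cell of each table differs from its neighbors: a non-transactional write under a transactional read aborts the transaction before locking, and a non-transactional read under a read-commit lock is the only Op-NC that proceeds.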

IV-2) Client

IV-2-1) Data Structure

All data is located in memory.

Figure II-2-1a and Figure II-2-2a describe the overall picture.

Detail: TBD.

Before July 3, 2014)

- Simplified:

- Removes prioritizing transactions by transaction start order. Transactions are prioritized by commit order. This enables storing data in a client-local buffer and writing it with a multi-write operation at commit time. We think users can build their own transaction prioritizing code on top of RAMCloud transactions if considerable performance loss is a concern.

- Does not involve the coordinator, by using two-phase commit with a pessimistic lock. Lock cleanup is handled with a timeout.

- For commit operation, we are investigating multiple options to implement transaction manager (TM). I am going to classify and investigate performance issues, etc:

- Locate TM in user library

- Pros)

- Smaller ( ? ) network traffic at commit

- Cons)

- Needs Non-Return-Point (NRP) repository and incurs the communication overhead.

- Application recompilation is needed when the transaction algorithm is modified.


- Locate TM in a master (which we hereafter name transaction master)

- Pros)

- No communication to NRP repository is needed

- Object code is independent of the transaction algorithm

- Cons)

- Data takes an extra hop through the transaction master


- There are many similarities between transactions and secondary keys. We are going to share ideas between them.

Previous version)

- Proposal on Oct. 24, 2013 : 20131023_TransactionOnRAMCloud_r0_64.pdf

- Proposal on Oct. 21, 2013 : 20131017_TransactionOnRAMCloud_r0_63.pdf

- Proposal on Oct. 17, 2013 : 20131017_TransactionOnRAMCloud_r0_62.pdf

- Proposal on Oct. 16, 2013 : 20131016_TransactionOnRAMCloud_r0_61.pdf

The proposal is still under revision for the items below: (Updated on Jan. 29, 2014)

- traditional transaction system

- requirement for transaction

- priority: earlier commit wins, similar to Sinfonia.

- Should older transactions be prioritized, or may an early commit overtake an older transaction?

- Should re-execution be minimized? According to (a), no optimization to reduce re-execution is implemented so far.

- lock or rewind to final consistent point?

- Should it be complex or simple? Take the simple one. The RAMCloud philosophy is to reduce latency with a simple implementation, which I think reduces the possibility of transaction conflicts with well-organized code.


- find missing race conditions and solutions

- implementation proposal: will be re-written.

- recovery sequence — done

- control sequence — writing

- data structure – writing

- optimization (TBD)

- assumption of transactions and examples - done

- related work

- 3 phase commit by Stonebraker, Quorum commit, Consensus algorithm

- Sinfonia, H-Store, SDD-1 ----- writing

- sharding, micro-sharding ---- writing

- ACID, CAP theorem –- writing

- benchmarks